The shift from AI hype to AI habit

AI is reimagining how organizations learn, and for many learning and development teams, AI has already become a vital assistant for building content and supporting learners. But 10% of our respondents claim they’ll never use AI. Not "we're waiting for the right time." Not "we need more budget." Never.

A fundamental divide has opened. On one side, leaders are igniting momentum by modeling AI use, and teams are creating courses in hours instead of weeks, personalizing learning at scale, and finally proving ROI to skeptical executives. On the other, leaders are ignoring the new technology, and teams are doing the same work the same way, watching their influence shrink as other departments sprint ahead.

This report examines where AI in learning stands today, what it should look like, why you can’t afford to wait to implement it, and how to get started. Discover practical examples and gain a clear view of both the risks and opportunities ahead. Most importantly, see why L&D has the chance to lead, to drive their organizations past hesitation and use AI to reach business goals.

Executive takeaways

Most L&D teams say they want personalization, but few tie AI to business performance. Stakeholder resistance (not expertise) is the top barrier. Adoption is uneven, use cases are narrow, and L&D is often excluded from AI strategy. The fix isn’t another deck; it’s small, outcomes-tied pilots that earn trust fast.

Here are the key takeaways from our survey:

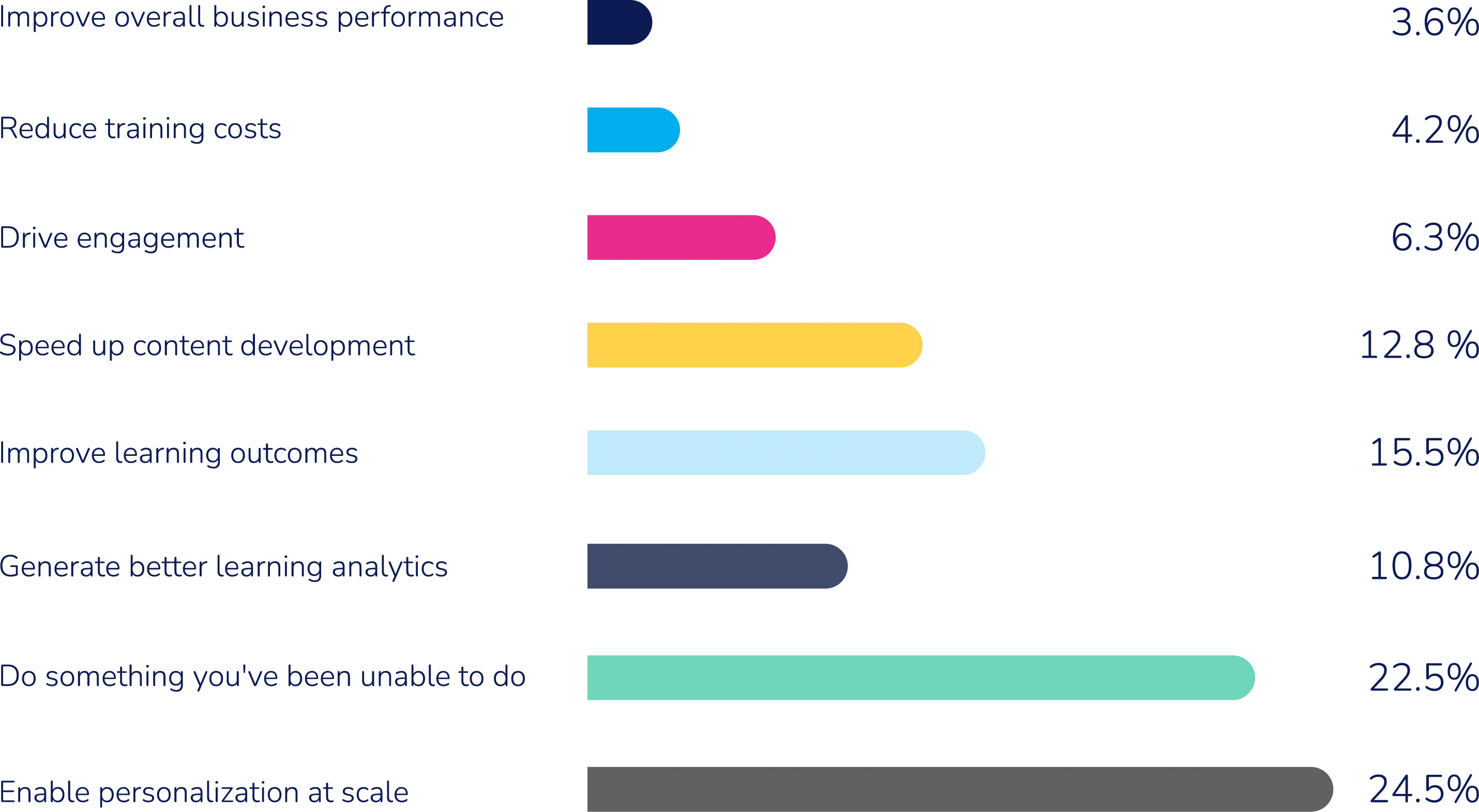

- Goals are misaligned. 25% of respondents say personalization is the top AI goal, while <4% prioritize business performance. Translation: executives ask for ROI; L&D optimizes UX.

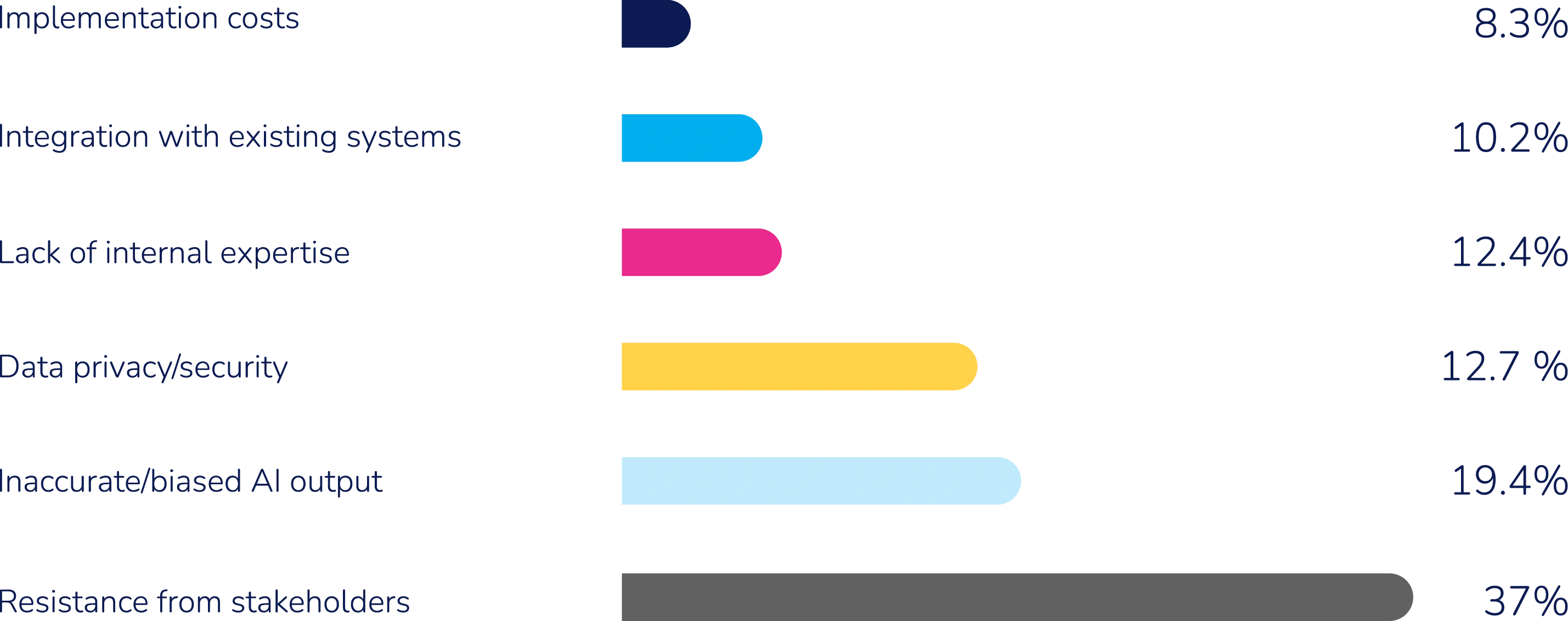

- The barrier is organizational. 37% cite stakeholder resistance as their biggest challenge; only 12% blame lack of expertise. You don’t win this with more theory, you win it with proof.

- Adoption is split. 25% started using AI in the last six months; 27% have not tried it, and of those non-users, 36% say they likely never will. The middle ground is shrinking.

- Use cases are tactical. Top: content creation (30%) and research (21%). Bottom: reporting (11%) and delivery (11%). Teams want scale but deploy for one-offs.

- L&D is under-represented. Only 22% of teams are in AI strategy discussions. Ethical readiness is low at 15%.

- The readiness gap is real. While 73% of L&D professionals use AI for basic tasks like drafting and ideation, only 28% feel confident integrating it into real learning workflows without quality degradation.

"AI has already helped HR and L&D move faster to accelerate content creation and streamline operations. But its real potential is far more transformative: enabling employees to build new capabilities that keep your business ahead of change and drive real value. Organizations that use AI to develop capability unlock a more adaptive, future-ready workforce. For that to happen, learning platforms must evolve; guiding, coaching, and adapting to how, when, and why people learn. As learning touches every role and function, now is the moment to rethink what learning means, and how it drives performance and growth." - Cheryl Yuran, Absorb CHRO

What AI-powered L&D can actually look like

AI is a thinking partner that changes what’s possible at every stage of the learning cycle.

AI is transforming learning from a one-time event into a continuous, intelligent experience. It’s not about doing the same work faster, but about unlocking outcomes that were never achievable before. Rather than a production tool you bolt onto existing workflows, AI works across the whole learning cycle: diagnosing problems with evidence, designing interventions with data, delivering learning in the flow of work, and measuring impact in business terms. The example below shows what that looks like when it's working.

An HRBP supporting the mid-market sales team comes to L&D to solve some urgent issues: The VP of Sales is noticing that deal-closing skills are weak and the pipeline is stalling. Here's how that plays out with and without AI.

| Step | Without AI | With AI |
| --- | --- | --- |
| 1. Diagnose the problem | You schedule interviews with three or four sales reps and a manager, manually review call recordings if you can get access, and synthesize notes into a needs analysis over a couple of weeks. By the time the diagnosis is done, the business has already moved on or lost patience. | You analyze CRM data, call transcripts, and existing performance reviews to identify specific deal-closing failure patterns within hours. The diagnosis arrives with evidence, not just observations, and is specific enough to design against immediately. |
| 2. Design the training intervention | You draft a learning design document from scratch, propose a half-day workshop because that's the familiar format, and spend several days aligning with the HRBP and sales manager on scope, outcomes, and what "good" looks like. Design assumptions are based on educated guesses. | You generate multiple intervention options (a three-module async series, a live practice-based workshop, a blended approach with spaced reinforcement), each mapped to a specific skill gap identified in the diagnosis. The HRBP sees concrete design options in the first conversation, not a week later. AI also drafts the success metrics upfront: deal velocity, win rate on mid-market accounts, manager observation scores. |
| 3. Develop the content | You could spend days on a single compliance course. Here, the work runs from building a custom sales scenario with realistic deal dialogue and objection-handling training to conducting SME interviews, manual scripting, and weeks of review cycles. You end up with a polished course, but given how long it takes to launch, it can go stale as soon as the product or market shifts. | You take deal transcripts, the company's sales methodology, and the ICP profile for mid-market accounts and run them through an AI tool. Within a few hours, you’ve got realistic deal-closing scenarios with branching dialogue, a facilitator guide, assessment questions, and a reinforcement email sequence. SME review time drops from weeks to a single focused session. When the pitch deck changes next quarter, the scenario updates in minutes. |
| 4. Deliver the content | You schedule training for a fixed date. Reps who joined after the session miss it. Those who attended forget most of it within a week. The facilitator prepares slide decks, exercises, and post-session summaries by hand for every cohort. Learning lives in the LMS, which reps visit once and never return to. | AI surfaces deal-closing practice modules directly in Salesforce at the moment a rep marks a deal as stalled. Spaced repetition nudges arrive in Teams timed to reinforce skills before high-stakes pipeline reviews. The facilitator's materials, activities, and session summaries are generated automatically for each new cohort. New reps onboarded three months later get the same experience without any additional L&D effort. |
| 5. Measure the impact | You send a post-training survey, get a 30% response rate, and report an average rating of 4.2/5 for "found it valuable." Connecting the training to actual deal outcomes requires pulling data from three systems, a manual VLOOKUP, and a spreadsheet that no one can find or knows how to use. Most ROI analysis gets abandoned before it's finished. | You continuously monitor deal velocity, win rates, and manager coaching scores for the cohort that completed training versus those who haven't. Thirty days post-training, you can show the HRBP a dashboard: mid-market close rates are up 18%, the average deal cycle is six days shorter, and the top three objection types are declining in call transcripts. |

The difference between using AI in your learning workflow and not using it is more than speed. Several of these steps were functionally impossible before AI, regardless of how large your L&D team is.

When you're thinking about where AI fits in your own practice, it helps to think in three roles rather than one.

| Role | What changes |
| --- | --- |
| AI as diagnostic partner | Most L&D teams are still diagnosing problems through interviews and surveys, methods that are slow, anecdotal, and often politically shaped. AI lets you start with evidence: performance data, call transcripts, CRM signals, engagement patterns. That changes the conversation with stakeholders from "here's what we think the problem is" to "here's what the data shows." It also means you're solving the right problem, not the one that was easiest to articulate in a meeting. |
| AI as delivery infrastructure | The default assumption in L&D is still that learning happens in a session: scheduled, attended, and then largely forgotten. AI makes it possible to deliver learning at the moment of need, inside the tools people already use, timed to when it will actually stick. That's not a feature of a better LMS. It's a fundamentally different model for how learning reaches people. |
| AI as measurement engine | The reason L&D has historically struggled to demonstrate ROI isn't lack of effort. It's that connecting learning activity to business outcomes required data access, analytical capability, and time that most teams don't have. AI removes those barriers. When measurement becomes continuous and automatic, you stop reporting on what happened in training and start reporting on what changed in the business. |

These three roles map to where AI has the most impact, making the entire learning process more intelligent, more responsive, and more connected to the outcomes that matter to the business.
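To make the measurement role a little more concrete, here is a minimal Python sketch of the cohort comparison described in the sales example: average win rate and deal cycle for reps who completed training versus those who haven't. The record layout, field names, and every number in it are illustrative assumptions, not survey data and not any CRM's actual export format.

```python
from statistics import mean

# Hypothetical per-rep records; in practice these might come from a CRM export.
reps = [
    {"rep": "A", "trained": True, "win_rate": 0.34, "cycle_days": 41},
    {"rep": "B", "trained": True, "win_rate": 0.30, "cycle_days": 44},
    {"rep": "C", "trained": False, "win_rate": 0.26, "cycle_days": 49},
    {"rep": "D", "trained": False, "win_rate": 0.24, "cycle_days": 51},
]


def cohort_summary(records, trained):
    """Average win rate and deal cycle for one cohort (trained or not)."""
    cohort = [r for r in records if r["trained"] is trained]
    return {
        "n": len(cohort),
        "win_rate": mean(r["win_rate"] for r in cohort),
        "cycle_days": mean(r["cycle_days"] for r in cohort),
    }


def compare_cohorts(records):
    """Difference between the trained and untrained cohorts."""
    trained = cohort_summary(records, True)
    untrained = cohort_summary(records, False)
    return {
        "win_rate_lift": trained["win_rate"] - untrained["win_rate"],
        "cycle_days_saved": untrained["cycle_days"] - trained["cycle_days"],
    }


result = compare_cohorts(reps)
print(result)
```

A gap between cohorts is a signal, not proof that training caused it. Reps who take training early may differ in other ways, so it helps to also compare each cohort against its own pre-training baseline before presenting the numbers.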

Want to see how AI can build capabilities, close skills gaps and streamline your admin experience? Meet Absorb Aura.

The current state of AI in learning

Absorb surveyed 1,700+ learning professionals, and the results show progress paired with hesitation. The data points in one direction: L&D is busy with AI, but not necessarily in the areas that will have the most business impact.

L&D is optimizing for the wrong goal

25% of L&D teams say their main reason for adopting AI is personalization at scale. Fewer than 4% are prioritizing business performance.

What it means: The emphasis is on learner experience rather than measurable outcomes, leaving a gap between what L&D wants and what executives expect.

Action for 2026: Shift focus from personalization as an end to personalization as a driver of measurable business outcomes. Develop frameworks that link learner experience improvements to performance metrics valued by executives.

"AI doesn't just personalize learning, it aligns it with business outcomes. And the organizations that will lead with it aren't the ones that started first, they're the ones that start with intention." — Leslie Kelley, Absorb's Chief Growth Officer

Stakeholder resistance is organizational, not technical

37% cite stakeholder resistance as their top challenge or concern when it comes to enabling AI in L&D, with only 12% pointing to a lack of internal expertise.

What it means: Adoption hurdles are organizational, not just technical. L&D can’t clear them without executive support.

Action for 2026: Build cross-functional coalitions with HR, IT, and executive leadership to foster buy-in. Create internal case studies and pilot programs that demonstrate tangible benefits of AI in learning to skeptical stakeholders.

Adoption is splitting into two camps, and the gap is becoming permanent

27% have been using AI for years, 46% have started recently and 27% still haven’t.

What it means: The market is splitting into early adopters and permanent laggards, rather than progressing on a gradual adoption curve.

Action for 2026: Launch awareness campaigns and low-risk pilot programs to engage lagging teams. Share success stories from early adopters to inspire confidence and momentum.

Teams are spending AI effort where it matters least

Most common: Content creation (30%) and research (21%).

Least common: Enhanced reporting (11%) and streamlined delivery (11%).

What it means: Teams want personalization that improves performance, but the most common uses of AI don’t deliver on that goal. Ambition and execution are misaligned.

Action for 2026: Invest in AI tools that support adaptive learning paths, real-time feedback, and intelligent delivery systems. Encourage experimentation with AI in coaching, mentoring, and performance support.

L&D is being locked out of the decisions that affect them most

Only 22% of L&D teams are included in AI strategy discussions.

What it means: Exclusion from decision-making creates a cycle where L&D is both blocked from experimenting and left out of setting direction.

Action for 2026: Advocate for L&D’s inclusion in enterprise AI governance. Position L&D as a strategic partner by aligning learning initiatives with broader digital transformation goals.

Ethical readiness is low, and the window to fix that is closing

Just 15% of learning professionals feel prepared to manage the ethical implications of AI in learning.

What it means: With AI shaping hiring, learning pathways, and performance evaluations, low readiness poses a significant risk.

Action for 2026: Develop ethical guidelines for AI use in learning, including transparency, bias mitigation, and data privacy. Train L&D teams on responsible AI practices and collaborate with compliance/legal teams to ensure safeguards are in place.

The risk isn't that L&D is ignoring AI, it's that most teams aren't engaging with it in the way their business needs. The teams pulling ahead have shifted from asking "How do we use AI in our work?" to "How do we use AI to become indispensable to the business?" That distinction is what separates the 22% who are in the room from the 78% who aren't.

The window for hesitation is closing, and it's closing fast. Early adopters are already showing what's possible, while organizations that are hesitating risk missing the moment entirely. Without alignment between goals, use cases, and strategy, many organizations will struggle to unlock AI's full impact.


The compounding advantage of AI: Why you can’t afford to wait

The acceleration curve with AI is like no other, because the technology is theoretically capable of implementing itself. Organizations that were slow to adopt in the past had decades to catch up.

| Technology | Commercially viable in | Time to majority adoption |
| --- | --- | --- |
| Printing press | ≈1450 | ≈50-100 years |
| Telegraph | 1844 | ≈25-30 years |
| Computers | 1981 | ≈20 years |
| Internet | 1993 | ≈12 years |
| iPhone | 2007 | ≈8 years |

But with AI, the window isn't measured in decades or even years, it's measured in quarters.

Quarter 1: You automate content creation and save 100 hours

Quarter 2: You use those hours to build personalized pathways that improve completion by 40%

Quarter 3: Better completion drives measurable performance gains you can show executives

Quarter 4: Proven impact earns you budget to scale what works

Meanwhile, competitors who waited are still in Quarter 1, trying to get their first pilot approved. By year two, the gap becomes uncrossable. They're eight quarters behind in:

- Capability building

- Credibility with leadership

- Competitive positioning

- Team expertise

Scenario planning for 2027

IT sees AI as infrastructure, HR sees it as a talent initiative, and operations sees it as process optimization. They're not wrong, but there’s a bigger picture. AI transformation isn't a technology project, a hiring strategy, or a workflow upgrade — it's a capability-building challenge, and capability building is L&D's core competency.

L&D is uniquely positioned to lead because you:

Understand how people actually learn. You don’t simply deploy tools; you design learning experiences that stick, and you know the difference between training that checks a box and learning that changes behavior.

Connect individual development to business outcomes. You understand the skills needed to get people from where they are to where the business needs them to be.

Influence the entire organization. If AI adoption needs to scale across thousands of people in dozens of functions, you already have the distribution network.

Speak both the language of learning and business. "Employees need to build AI literacy" becomes "we'll reduce time-to-productivity by 30% and increase retention in critical roles."

Take your seat at the table to ensure AI is implemented to its fullest potential

Your org's trajectory determines your reality next year, and the market is already splitting: 27% of L&D teams have been using AI for years, 46% have started recently, and 27% still haven't begun. What compounds the problem is that only 22% of L&D teams are included in AI strategy discussions at all. When learning teams are excluded from decision making, it creates a cycle: they are blocked from experimenting, left out of setting direction, and unable to prove value. Without better alignment between goals, use cases, and strategy, many organizations will struggle.

Which scenario is your team heading toward?

| Scenario one: Your team waits for perfect clarity | Scenario two: You adopt AI tools but never move beyond efficiency plays | Scenario three: Your team runs pilots, proves value, and earns trust |
| --- | --- | --- |
| By 2027: | By 2027: | By 2027: |
| This happens if: You continue to debate whether to adopt AI while others are scaling what works. | This happens if: You use AI to optimize the status quo instead of reimagining what is possible. | Get this result: Start now, run experiments, fail fast, document learnings, build credibility one pilot at a time. |

Your playbook for getting to scenario three

Most L&D professionals feel comfortable with drafting outlines, generating quiz questions, and brainstorming examples. The numbers show that's where most teams are stuck — and it's not enough. Follow this playbook to move past surface-level AI use and toward the strategic impact that allows you to reimagine your entire learning experience and impact business outcomes.

Stage one: Get your first win (Your Q1 — start here regardless of where you are)

If you're among the 46% who have recently started, or the 27% who haven't yet, this is your entry point. If you're already here, use this stage to sharpen what you measure and who you involve.

Start so small that failure is cheap. The goal isn’t perfection, it’s proof that earns you permission to do more.

Start with your own work first

Before deploying AI for learners, use it to enhance your own workflows:

- Planning and project management. Use AI to organize initiatives, track dependencies, and generate timelines.

- Research and synthesis. Scan industry trends, summarize reports, and identify patterns faster.

- Content refinement. Draft outlines, rewrite for clarity, and generate multiple variations.

- Administrative tasks. Create closed caption files, generate infographics, and automate documentation.

Build practical AI literacy through daily use, not theory. By using AI daily in your own work, you learn where it excels and where it fails. That’s knowledge you can’t get from a course, and it builds the critical eye you need to review AI outputs effectively.

Pick one high-impact, low-barrier use case

The data shows content creation is where most teams start — and that's a reasonable entry point, as long as you treat it as a stepping stone, not a destination. Best starting points:

- Content creation. Use AI to draft course outlines, generate quiz questions, or create scenario-based training. Course authoring tools or Claude can reduce development time by 50-70%.

- Personalized learning paths. Platforms like Absorb use AI to tailor journeys based on learner behavior, fast-tracking some while providing extra support where needed.

- Just-in-time support. Deploy AI chatbots that surface relevant resources when learners need help, embedded directly in workflow tools.

- Research and curation. Use AI to scan industry trends, synthesize reports, and identify skill gaps faster than manual methods.

Tie results to metrics executives already track

Don’t measure completion rates. Measure:

- Time saved (hours reclaimed for high-value work)

- Revenue impact (sales performance, customer retention)

- Time-to-competence (how quickly new hires reach productivity)

- Cost reduction (less reliance on external vendors)

Go from measuring learning activity to measuring business influence, with a coherent plan for what to collect, how to connect it, and when to act on it.
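As a starting point for that plan, the sketch below turns a pilot's before-and-after measurements into three of the metrics listed above: time saved, time-to-competence, and cost reduction. It is a minimal illustration in Python. The `PilotResult` fields and the sample numbers are made up for the example, and revenue impact is left out because it depends on how your organization attributes sales or retention results.

```python
from dataclasses import dataclass


@dataclass
class PilotResult:
    """Before/after measurements for one pilot (all fields are hypothetical)."""
    baseline_hours_per_course: float
    pilot_hours_per_course: float
    courses_built: int
    baseline_days_to_competence: float
    pilot_days_to_competence: float
    vendor_spend_before: float
    vendor_spend_after: float


def executive_summary(p: PilotResult) -> dict:
    """Translate pilot measurements into executive-facing metrics."""
    hours_saved = (p.baseline_hours_per_course - p.pilot_hours_per_course) * p.courses_built
    days_gained = p.baseline_days_to_competence - p.pilot_days_to_competence
    return {
        "hours_saved": hours_saved,
        "time_to_competence_reduction": days_gained / p.baseline_days_to_competence,
        "vendor_cost_reduction": p.vendor_spend_before - p.vendor_spend_after,
    }


# Made-up numbers for illustration only.
pilot = PilotResult(40, 15, 4, 60, 48, 20_000, 14_000)
summary = executive_summary(pilot)
print(summary)
```

Agreeing on the baseline figures with stakeholders before the pilot starts matters more than the arithmetic. If the baseline is disputed afterward, the result will be too.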

Involve skeptics in pilot design

Remember: 37% of teams cite stakeholder resistance as their top challenge. The most effective way to neutralize that resistance is to make skeptics co-owners of success. Ask: "What would success look like to you?" Then build the pilot to deliver exactly that. When skeptics help define success criteria, they become advocates.

Stage two: Build your three key pillars (Your Q2 and Q3 — where efficiency becomes impact)

Your first win does something more valuable than save time; it shows you where AI moves the needle for your team, which problems it solves cleanly, which ones still need human judgment, and what your stakeholders respond to. Use those reclaimed hours to build something that changes outcomes, not just throughput.

The mistake most teams make at this point is trying to scale everything at once. Instead, use what you learned in Stage one to deliberately expand into areas with the highest leverage for your organization.

Most teams find those high-leverage areas cluster around three questions:

- Are we working on the right things? (Strategy)

- Are we building the right content? (Learning design)

- Are we running our operations efficiently? (Learning operations)

You probably already have a sense of which one is your biggest constraint. Start there and bring the same "small pilot, prove value" discipline, just applied to a bigger problem. Once you have proof, expand strategically. This is where you transition from isolated wins to systematic transformation.

We’ve seen successful teams organize their efforts around these three pillars:

Pillar one: AI in learning strategy

This pillar directly addresses one of the most striking findings in the data: only 22% of L&D teams are included in AI strategy discussions. The reason so many are excluded is that they haven't yet connected learning activity to business outcomes in a language executives recognize. That's what this pillar builds.

Learning professionals are eager to play a more strategic role but often lack the tools and data to do so. Predictive insights will give L&D a seat at the table. You should know which skills will matter six months from now. How learning translates to revenue. Where training moves performance metrics. AI shifts L&D from a support function to a business driver.

- Forecast what's coming. Use AI to forecast skill gaps before they cost you. Map emerging roles and the capabilities they’ll demand. Prioritize training where it matters most.

- Prove the connection. Link LMS data to performance metrics like sales, retention, and productivity gains. Show how product training drives quota attainment.

- Cut time to competency. Intelligent curation and adaptive pathways speed up onboarding. Get new hires productive faster and existing employees upskilled sooner.
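The "prove the connection" step can start smaller than a full analytics stack. The Python sketch below assumes two exports keyed by the same employee ID, one from the LMS and one from a sales system, joins them, and compares quota attainment for people who completed product training against those who did not. The dictionaries, IDs, and values are hypothetical, and the join logic is an illustration rather than any platform's actual integration.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical exports keyed by employee ID.
lms_completions = {"e1": 3, "e2": 0, "e3": 2, "e4": 0, "e5": 1}  # product modules completed
quota_attainment = {"e1": 1.10, "e2": 0.80, "e3": 1.00, "e4": 0.90, "e6": 0.95}


def link_records(completions, performance):
    """Join on employee ID and list IDs that appear in only one source."""
    shared = completions.keys() & performance.keys()
    matched = {e: (completions[e], performance[e]) for e in shared}
    unmatched = sorted(completions.keys() ^ performance.keys())
    return matched, unmatched


def attainment_by_completion(matched):
    """Average quota attainment for completers versus non-completers."""
    groups = defaultdict(list)
    for modules, attainment in matched.values():
        key = "completed" if modules > 0 else "not_completed"
        groups[key].append(attainment)
    return {key: mean(values) for key, values in groups.items()}


matched, unmatched = link_records(lms_completions, quota_attainment)
averages = attainment_by_completion(matched)
print(averages, unmatched)
```

The unmatched list is worth reporting alongside the averages. Records that exist in only one system are often the first data-quality problem a stakeholder will ask about.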

What this looks like in practice: A professional services firm stops scheduling annual training cycles and builds a continuous upskilling engine instead. Client engagement data, project outcomes, and individual performance signals feed a system that identifies capability gaps before they affect delivery. When a new regulation lands or a market shift changes client demand, updated guidance reaches the relevant teams within days, not quarters.

- Leaders define the capabilities the firm needs to compete.

- AI identifies who needs what and when, and delivers it without disrupting billable time.

- L&D shifts from managing calendars to managing capability, ensuring an organization's most valuable assets — people — stay ahead of what clients need next.

The firm isn't reporting on training completion. It's reporting on capability readiness against live business demand. That's what earning a strategic seat looks like.

"Completion was never the goal. Performance always was. Now AI can close the gap between the two, and L&D will never look the same." — Obaidur Rashid, Absorb's Chief Technology Officer

Pillar two: AI in learning design

This pillar addresses the ambition/execution gap the data surfaces: teams want personalization that improves performance, but most AI use is concentrated in content creation and research — early-stage tasks that don't yet close the loop on outcomes. This is how you bridge that gap.

It’s ambitious to design learning that feels relevant to every employee. Requests can pile up faster than teams can respond, leaving little time for reflection. What’s missing is visibility, like data on what learners need, how content performs, and where skills are slipping. AI lightens the production load while supporting smarter content creation and more inclusive design.

- Understand learner needs at scale. Natural language processing scans feedback and performance data to spot skill gaps in real time.

- Curate content creation. Generative tools draft outlines, quizzes, and microlearning modules that experts refine. For example, co-develop scenario-based compliance training in hours, not weeks.

- Create adaptive learning pathways. Systems adjust difficulty based on learner progress. Platforms like Absorb automatically personalize learning journeys.

- Increase accessibility and inclusion. AI auto-generates transcripts, translations, and alternative formats at scale, making learning accessible globally.
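To show what "adjust difficulty based on learner progress" can mean at its simplest, here is a rule-based sketch in Python. It is not how Absorb or any other platform implements adaptive pathways. The function name, the five-level scale, and the thresholds are all assumptions chosen for the example.

```python
def next_difficulty(current: int, recent_scores: list[float],
                    raise_at: float = 0.85, lower_at: float = 0.60,
                    min_level: int = 1, max_level: int = 5) -> int:
    """Step difficulty up or down based on the average of recent scores."""
    if not recent_scores:
        return current  # no evidence yet, so keep the learner where they are
    average = sum(recent_scores) / len(recent_scores)
    if average >= raise_at:
        return min(current + 1, max_level)
    if average < lower_at:
        return max(current - 1, min_level)
    return current


# A learner at level 2 who scores well on recent assessments moves up one level.
print(next_difficulty(2, [0.9, 0.95]))
```

Real adaptive systems weigh far more signals than quiz scores, but a transparent rule like this is a useful baseline: it is easy to explain to stakeholders and easy to audit for bias.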

Critical: AI as design assistant, not author

The most common mistake is treating AI as a content creator rather than a design assistant. Successful teams follow this process:

- Generate: Use AI to create initial drafts, variations, examples.

- Validate: Critically review for accuracy, bias, pedagogical soundness.

- Refine: Edit to match brand voice, learner context, learning objectives.

- Own: Take full responsibility for the output. It’s yours, not the AI's.

This approach maintains quality while capturing efficiency gains. The goal is to spend less time on initial drafts and more time on the strategic work of ensuring content drives outcomes.

What this looks like in practice: A hospital network replaces separate onboarding and training programs with a single capability platform spanning all sites. New nurses receive tailored pathways based on role, experience, and live performance signals collected from clinical systems. The platform identifies the capabilities that will most improve outcomes each quarter and automatically assigns targeted microlearning and simulations to specific units.

- Managers track progress through real-time capability dashboards.

- L&D becomes part of the system itself, steadily increasing workforce capability across the entire network rather than delivering isolated programs.

The design work didn't get simpler; it got smarter. L&D isn't producing more courses; it's building the infrastructure that continuously matches content to the specific learner, moment, and performance signal that makes it land.

"AI comes in as a collaborative co-worker that can support content creation, personalize learning experiences and reduce the burden on L&D teams." - Leslie Kelley, Absorb's Chief Growth Officer

Pillar three: AI in learning operations

This pillar tackles the least-adopted AI uses in the data — enhanced reporting and streamlined delivery. These aren't glamorous, but they're where operational drag lives. Fixing them is what frees up the capacity to do everything else in this playbook.

Behind every great learning program is a lot of behind-the-scenes work. The challenge is that most of it still feels slow and repetitive. AI changes that by automating tasks, surfacing insights, and keeping operations efficient.

- Automate administrative tasks. Handle compliance reminders, enrollment, and reporting automatically, cutting manual follow-up.

- Optimize learning platforms. AI reviews usage data and recommends improvements. For example, it identifies low-engagement modules and suggests design changes.

- Scale delivery. AI-driven translation and localization enable seamless global rollouts.

- Get predictive analytics. Anticipate training needs, resource demands, and learner drop-off. Flag at-risk learners early and trigger interventions.
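Flagging at-risk learners does not have to begin with a predictive model. The Python sketch below uses two plain rules, inactivity and low progress close to a deadline, to produce a list an administrator or an automated reminder could act on. The field names, thresholds, and sample learners are assumptions made for illustration. A production system would likely tune or replace these rules with learned ones.

```python
from datetime import date


def flag_at_risk(learners, today, max_idle_days=14, min_progress=0.5, deadline_window=7):
    """Return {learner_id: [reasons]} for learners who are idle or behind."""
    flagged = {}
    for learner in learners:
        reasons = []
        if (today - learner["last_active"]).days > max_idle_days:
            reasons.append("inactive")
        days_left = (learner["due"] - today).days
        if days_left <= deadline_window and learner["progress"] < min_progress:
            reasons.append("behind_schedule")
        if reasons:
            flagged[learner["id"]] = reasons
    return flagged


# Hypothetical learner records.
learners = [
    {"id": "L1", "last_active": date(2026, 2, 27), "due": date(2026, 3, 20), "progress": 0.8},
    {"id": "L2", "last_active": date(2026, 2, 1), "due": date(2026, 3, 5), "progress": 0.2},
    {"id": "L3", "last_active": date(2026, 2, 28), "due": date(2026, 3, 6), "progress": 0.3},
]

flagged = flag_at_risk(learners, today=date(2026, 3, 1))
print(flagged)
```

Keeping the reason next to each flag makes the follow-up specific: an inactive learner needs a nudge, while one who is active but behind may need a different format or more time.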

What this looks like in practice: Instead of producing separate maintenance courses, a manufacturer builds an AI-supported capability ecosystem. Machine data, manuals, and technician performance feed an engine that creates micro-lessons and updated guidance automatically. When a fault pattern appears, the system refreshes instructions and sends them to the technicians who need them.

- Leaders define the outcomes they want — faster diagnosis, reduced downtime.

- AI handles production and delivery.

- L&D ensures skills reach the right people at the right moment, creating continuous upskilling across all plants.

No enrollment cycles. No course refresh projects. No lag between a problem appearing on the floor and the guidance reaching the person who needs it. Operations stops being the part of L&D that slows everything down and becomes the engine that keeps capability current at scale.

A note on ethical readiness across all three pillars

The data shows only 15% of learning professionals feel prepared to manage the ethical implications of AI. As you build out these pillars (particularly where AI informs decisions about who gets development opportunities, and where AI shapes content and pathways), ethical safeguards aren't optional. At this stage, establish your team's guidelines for transparency, bias review, and data privacy, bringing compliance and legal in as partners, not gatekeepers. Getting ahead of this now prevents it from becoming a blocker at Stage 3.

Stage three: Scale and lead (Your Q4 and beyond — from proven impact to strategic authority)

Once you have proof and a foundation, it's time to lead. If you're here, you've done what only 22% of L&D teams have managed: you're in the room. Now the goal is to stay there and expand your influence.

- Claim your seat at the strategy table. You've proven capability. Now frame L&D as an AI implementation partner to shape strategy, not just execute it.

- Stop optimizing, start transforming. Efficiency was the first phase. Now reimagine how learning drives revenue, retention, and competitive advantage.

- Explore agentic AI. The next wave involves autonomous agents that plan, execute, and iterate. Position L&D to orchestrate, not just administer.

What transformation actually looks like: A technology company replaces its fragmented customer onboarding process with a single enablement platform spanning every product line and market. Now new customers receive tailored learning pathways based on their industry, use case, and where they are in the adoption journey. And the platform tracks engagement and product usage signals to identify where customers are struggling and automatically surfaces the right guidance at the right moment — before they raise a support ticket or disengage.

- Leaders define the customer outcomes that drive revenue growth.

- Customer success teams see real-time visibility into enablement progress and product adoption across their entire portfolio.

- L&D stops producing one-size-fits-all onboarding content and starts building the infrastructure that turns new customers into confident, capable ones — faster and at scale.

L&D's new job looks fundamentally different now. You're no longer building better employees; you're creating better business outcomes. When capability building points at customers, retention, and competitive position instead of just the workforce, L&D takes on a new role: driving profitable growth.

"When AI handles the heavy lifting, L&D teams can focus on what truly matters: strategic, impactful learning." - Craig Basford, Absorb's EVP, Product

Getting to “Yes”: Pre-empt objections to AI with proof

AI adoption can be complex and messy, and it requires sustained, intentional effort. Organizations that succeed may be better resourced, but they're also more deliberate about addressing what actually blocks progress and dedicated to finding solutions.

The good news? The barriers are predictable. We see the same four objections show up across organizations, regardless of size or industry. The difference between teams that move forward and teams that stay stuck isn't whether they face resistance; it's whether they address it with proof or more promises.

What intentional adoption looks like:

- Intentional ≠ slow: Moving with intention doesn't mean building the perfect strategy before taking action. It means being deliberate about what you pilot, how you measure it, and how you use early wins to build momentum. Fast, focused experiments beat slow, comprehensive plans.

- Intentional = evidence-based: Every assumption gets tested. Every objection gets answered with data, not arguments. "Will stakeholders support this?" becomes "Let's run a two-week pilot and find out."

- Intentional = iterative: You don't need to get everything right on the first try. You need to learn fast, adjust, and compound small wins into bigger capabilities. Teams that succeed with AI treat adoption as a continuous learning process, not a one-time implementation.

The reality: AI won’t replace L&D professionals. But L&D professionals who use AI thoughtfully will replace those who don’t, and those who rely on AI without developing core skills will struggle to add strategic value.

The barriers you'll face when implementing AI, and how to move past them

| "We can't connect learning to business impact" | "We don't have the bandwidth to experiment with AI" | "We're not sure AI output is good enough to trust" |
| --- | --- | --- |
| What's really happening: The data exists; it's just siloed. LMS, HRIS, CRM, and performance systems don't talk to each other, and most L&D teams can generate reports but can't translate them into decisions that executives care about. Deloitte found 95% of L&D organizations don't excel at using data to align learning with business objectives, and 69% lack the skills to ask the right questions linking learning to business results. | What's really happening: You're not wrong; the pressure is real. The 2025 L&D Global Sentiment Survey found "do more with less" was the dominant theme, and 40% of L&D professionals say their colleagues are too busy to use new AI tools effectively. But the teams waiting for capacity to free up before experimenting are the ones falling furthest behind. AI is how you get the bandwidth back, not something you adopt after you have it. | What's really happening: Skepticism about AI quality is rational and growing. Among developers, trust in AI accuracy dropped from 40% to 29% in a single year, even as usage climbed. The core frustration: outputs that are almost right but subtly wrong, and fixing them takes longer than starting from scratch. In L&D, the stakes are higher: a course that's pedagogically off by 20% can actively undermine the behavior change it was designed to produce. |
| How to address it: | How to address it: | How to address it: |
| What won’t work: Waiting for a perfect data infrastructure before connecting learning to outcomes. You don't need a unified data lake; you need one clean line between one learning program and one business result. | What won’t work: Waiting until the team has more capacity. That moment doesn't arrive on its own; it has to be created, and AI-assisted content development, admin automation, and learner support are three of the fastest ways to create it. | What won’t work: Either extreme: dismissing AI output as too unreliable to touch, or publishing it without instructional review. The L&D teams that get this right treat AI like a talented but junior instructional designer: high output, needs an experienced eye before anything goes to learners. |

Reality check: At Absorb, we didn’t build our AI expertise overnight. Our solution? Start with one or two AI-experienced team members, supported by colleagues with deep product knowledge or excellent communication skills. Build everyone’s AI capability through daily practice:

- Spend 30 minutes daily using AI tools (Absorb Aura, Copilot)

- Join AI communities (LinkedIn groups, Slack channels)

- Partner with teams already using AI (IT, marketing, customer service)

- Create AI projects or experiment with ways to use AI in their current jobs

- Learn out loud: share experiments and findings in town halls and all-hands meetings

Learning as an engine for change: AI-powered, human-centered

A manufacturer's technicians receive updated guidance the moment a fault pattern emerges. A hospital's nurses build capability continuously, without a single discrete training event. A professional services firm spots a capability gap before it affects client engagement. A technology company's customers become confident and capable before they ever need to raise a support ticket.

None of these organizations is running L&D the way it used to work.

Here's what that shift looks like at the level of the whole function: where L&D has been, where it can go, and why closing that gap is critical to your business.

From content factory to capability architect: The manufacturer didn't commission a new maintenance course when fault patterns changed; the system updated itself. The technology company didn't produce a new onboarding deck for every product line; it built a platform that adapts to every customer. That's the shift: architecting ecosystems where AI handles production and delivery while leaders focus on ensuring the right capabilities reach the right people at the right time.

From reactive service function to proactive strategic partner: The professional services firm isn't waiting to be briefed on the next strategic move; L&D already knows what capabilities that move will require, and it's building them. L&D isn't responding to other people's priorities anymore; it's shaping them. When executives discuss strategic initiatives, L&D is in the room asking, "What capabilities do we need to build for this to succeed?" and offering confident, credible answers.

From administrative overhead to competitive advantage: While competitors are still onboarding customers manually and reactively, the technology company is compounding an advantage that gets harder to close every quarter. While competitors struggle to adopt AI because their workforce isn't ready, organizations with strategic L&D move fast because the foundation is already built. That speed compounds into market position.

From siloed function to enterprise capability engine: The hospital network didn't run a training program. It built infrastructure that made every nurse measurably more capable. L&D stops being a function and becomes the thing that makes every employee more capable, every quarter, across the entire organization.

But what shouldn’t change?

AI transforms how L&D operates, but don’t forget what L&D uniquely brings to the table:

- Human judgment about what capabilities matter and why

- Instructional design expertise that ensures learning sticks

- Empathy and context that AI can't replicate

- Strategic thinking about how learning connects to business outcomes

- Trust and relationships that make change management possible

AI handles the scale, the speed, the personalization, and the repetitive work. You provide judgment, context, strategy, and human connection.

Strategic, AI-enabled learning that transforms capability and performance

If L&D doesn't step up, here's what happens:

- IT leads AI adoption: They'll focus on technical implementation, not behavioral change. Rollout might happen fast, but adoption will be shallow.

- HR leads AI adoption: They'll focus on talent implications: hiring for AI skills and restructuring roles. But who's building AI capability in your current workforce? Who's helping 10,000 employees who aren't going anywhere learn to work differently?

- Operations leads AI adoption: They'll optimize their own functions first. Sales ops builds sales enablement. Customer service builds their own training. Marketing builds theirs. L&D gets fragmented into a service provider for other people's initiatives, never shaping strategy.

But when L&D leads, you become the function that enables enterprise-wide transformation. You're not supporting someone else's AI initiative, you're driving organizational capability at scale.

This is the most significant opportunity L&D has had in a generation to shift from cost center to strategic driver. The question is whether you'll seize it. Our survey data shows most teams aren't positioned to lead yet, but the gap between where you are and where you need to be is closable.

The foundation is being built now. Organizations that position L&D in their AI strategy will unlock:

- Hyper-personalized learning. Systems that understand individual context and adapt in real time as needs evolve.

- Fully integrated experiences. Learning embedded into the tools teams already use — effortless, not forced.

- Outcome-driven measurement. Direct connections between learning and performance impact. The questions executives have asked for years, finally answered.

- Compounding intelligence. AI gets smarter with every interaction, building organizational capability over time.

Position L&D to build a learning ecosystem that’s:

- Contextual: Learning is delivered when and where it's needed, not on a predetermined schedule.

- Skills-based: Learning connects to capabilities that drive business outcomes, not generic competencies.

- Outcome-driven: Learning is measured by performance impact, not completion rates.

- Built in, not bolted on: Learning is integrated into daily work, not separate from it.

The window is open. You have your playbook. The next move is yours.

FAQs

How can we use AI to connect personalized learning to business outcomes?

AI personalization connects to business outcomes when built around role requirements and performance signals, not learner preferences. Personalizing for engagement optimizes for a different goal than closing capability gaps that are costing the business. The connection happens when learning paths are configured against job roles and business objectives, so reporting traces development to retention, productivity, or revenue rather than completion rates. Absorb Skills structures every course against a skill, every skill against a job role, and every role against a tangible outcome, giving talent managers, L&D directors, HR business partners, and people managers at distributed enterprises a measurement foundation built for business impact.

How can we use AI to link learning to business performance metrics?

The mechanism connecting learning to business metrics is data integration, not better reporting. LMS completion data, CRM performance records, and operational metrics traditionally sat in separate systems. When they’re connected, AI can identify correlations between learning activity and performance outcomes, surfacing them in formats executives recognize. The practical result is showing that employees who completed specific training outperformed those who did not on metrics the business already tracks. For revenue and growth leaders at enterprises running multiple business systems, Absorb Analyze enables training and performance data to flow alongside the metrics that matter to them.
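As a rough illustration of the comparison described above, here is a minimal Python sketch that joins training completions to a single business metric and compares the two groups. It does not represent how Absorb Analyze works; the employee IDs, the win-rate figures, and the `compare_groups` helper are all hypothetical and stand in for whatever your LMS and CRM exports contain.

```python
from statistics import mean

# Hypothetical sample data; in practice these would be exports
# from an LMS (completions) and a CRM (performance metric).
lms_completions = {"e1", "e2", "e4"}  # employee IDs that completed the training
crm_win_rates = {"e1": 0.42, "e2": 0.38, "e3": 0.25, "e4": 0.40, "e5": 0.31}


def compare_groups(completed_ids, metric_by_employee):
    """Return the mean metric for completers, non-completers, and the gap."""
    completers = [v for k, v in metric_by_employee.items() if k in completed_ids]
    others = [v for k, v in metric_by_employee.items() if k not in completed_ids]
    if not completers or not others:
        raise ValueError("need at least one employee in each group")
    avg_completers = mean(completers)
    avg_others = mean(others)
    return {
        "completers": avg_completers,
        "non_completers": avg_others,
        "difference": avg_completers - avg_others,
    }


summary = compare_groups(lms_completions, crm_win_rates)
print(f"Completers:     {summary['completers']:.2f}")
print(f"Non-completers: {summary['non_completers']:.2f}")
print(f"Difference:     {summary['difference']:.2f}")
```

A gap like this is a correlation, not proof that the training caused the result, so it works best as the starting point for a focused pilot with comparable groups.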

How can we use AI to forecast skill gaps before they become a problem?

AI can forecast skill gaps by continuously analyzing performance signals, role requirements, and learner progression data, identifying shortfalls before they affect delivery or attrition. Traditional skills gap analysis is retrospective: a problem surfaces, a program is commissioned, and months pass. AI makes identification continuous and proactive. Absorb Skills assesses employees against 12,000 skills and nearly 200 competencies mapped against 1,600 job roles. Absorb's State of Upskilling report found only 10% of organizations currently respond to skill gaps when new business needs arise, but with Absorb Skills organizations can turn that reactive pattern into proactive action.

How can we make sure we’re using AI in learning responsibly?

Four areas require explicit policy before AI is deployed in a learning environment: learner data governance, algorithmic transparency, bias mitigation in content and pathways, and human oversight of development decisions. Learners should understand when AI shapes their experience. AI-generated content should be reviewed before deployment, particularly where it influences development opportunities. High-stakes decisions affecting who receives development, whether that is a new hire in a regulated role, a frontline worker in a safety-critical environment, or a senior individual contributor being considered for a leadership pathway, should retain human review. Getting ahead of these four areas before deployment is significantly easier than managing the consequences after.

How can we use AI to surface learning at the moment of need?

AI changes learning delivery by pushing relevant content based on signals from a learner's work context rather than waiting for them to visit a platform and self-enroll. The biggest barrier to learning in the flow of work is friction. Every additional step between recognizing a knowledge gap and accessing a resource reduces the likelihood of engagement. AI-powered search understands intent rather than just matching keywords. Absorb Aura pulls answers from assigned training without requiring anyone to reopen a course.

How are leading L&D teams using AI to prove real business impact, not just faster content creation?

Leading L&D teams are shifting from measuring learning activity to measuring business influence. Rather than reporting course completions, they are connecting training interventions to the metrics executives already track: time-to-productivity for new hires, win rates for account executives, incident reduction for safety officers, and retention rates for people managers. The shift requires two things: clear upfront agreement on which business metric a learning intervention should move, and the data infrastructure to track it. Teams that have made this shift consistently report stronger stakeholder relationships, larger budgets, and inclusion in strategy conversations they were previously excluded from.

How does Absorb Aura help L&D teams move from AI hype to scaled, visible impact?

Most AI tools help L&D teams work faster; Absorb Aura is designed to change what L&D teams can demonstrate. For L&D directors, learning operations managers, and HR business partners at enterprise organizations, Aura's agent team handles content creation, learner support, admin queries, and skills coaching simultaneously, freeing instructional designers, enablement leads, and talent managers to focus on the strategic work that earns executive visibility. Aura connects learner activity to performance signals in real time, so the impact of learning on nurse readiness, engineer certification, or sales rep ramp time becomes reportable rather than assumed.

What metrics are high-performing L&D teams using to show AI impact to executives?

High-performing L&D teams have moved away from completion rates and satisfaction scores as primary evidence of impact. The metrics that resonate with CFOs, COOs, and CHROs are time-to-competency for new hires and career movers, internal mobility rates showing talent development is working, error and incident reduction for frontline workers and technicians in safety-critical roles, revenue per rep or quota attainment for sales and account teams, and support ticket reduction for customer success and service functions. These metrics connect learning investment to outcomes the business is already measuring, making the ROI case without requiring executives to learn a new reporting language.
